Key Takeaway: Most small-business websites are invisible to AI search platforms like ChatGPT, Claude, and Gemini. The reason usually isn't bad content. It's technical: your website can't be read by AI crawlers, you've got no structured data, or your content doesn't answer the questions AI is looking for. An AI visibility scanner can tell you exactly where the problems are.

You've done the work. You've got a website. Maybe you've even paid someone to do SEO on it. Your Google ranking looks reasonable. Business ticks along.

Then someone tells you to ask ChatGPT about your own business. You type in "best [your service] in [your town]", and your name isn't there. Your competitor's is. Or worse, the AI recommends businesses from 30 miles away and acts like yours doesn't exist.

It's a gut punch. And it's happening to nearly every small business in the UK right now.

ChatGPT currently recommends just 1.2% of all local business locations [1]. That's from an analysis of over 350,000 locations. 98.8% are invisible. Not ranked low. Not on page two. Completely absent from the AI's awareness.

And with 51% of UK adults now using AI search tools to find products, services and advice [2], this isn't a future problem. It's a right-now problem.

So why is it happening? And more importantly, what can you do about it?

Reason 1: AI Crawlers Can't Read Your Website

This is the biggest cause of AI invisibility for small businesses, and it's the one nobody talks about because it requires technical knowledge to spot.

AI platforms send automated bots, called crawlers, to visit your website and read its content. Each platform has its own:

GPTBot is OpenAI's crawler. It reads your site to train ChatGPT and power ChatGPT Search.

ClaudeBot is Anthropic's crawler for Claude.

PerplexityBot crawls sites to surface them in Perplexity's search results. Unlike the others, it's not used for model training, only for live search [3].

Google-Extended is Google's control for whether your content feeds into Gemini.

These crawlers work differently from the Googlebot you're familiar with. And here's the critical part: 69% of AI crawlers cannot execute JavaScript [4].

If your website is built using a JavaScript framework that renders content in the browser (developers call this "client-side rendering" or CSR), then nearly seven out of ten AI crawlers visit your site and see nothing. A blank page. Empty HTML. Your content only appears after the JavaScript runs in a human's browser. The AI crawler doesn't have a browser. It just reads the raw code. And the raw code is empty.

35% of mobile websites don't have their main content statically discoverable in the source code [5]. That means over a third of sites on the web are partially or fully invisible to AI systems.

Research from Onely found that even Google takes nine times longer to crawl JavaScript-rendered content compared to plain HTML pages [6]. Google has the resources to process JavaScript eventually. Most AI crawlers don't bother.

This is why server-side rendering (SSR) is so important for AI visibility. An SSR website sends the full, finished HTML to the crawler. The content is right there in the source code before any JavaScript runs. Every AI crawler can read it. Immediately. No waiting, no rendering, no blank pages.

How to check: Right-click on your website and select "View Page Source." If you can see your actual content, your headings, your paragraphs, your business information in that source code, you're server-side rendered. If you see mostly JavaScript code, empty <div> tags, and references to .js files where your content should be, you've got a client-side rendering problem.
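
The same check can be roughly automated. The sketch below uses only Python's standard library to count how much readable text is present in a page's raw HTML before any JavaScript runs. The function names, the two sample pages and the 200-character threshold are illustrative assumptions, not an established standard, so treat the result as a hint rather than a verdict.

```python
from html.parser import HTMLParser


class _TextCounter(HTMLParser):
    """Collects text found outside <script> and <style> tags."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is not inside a skipped tag.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text_length(raw_html: str) -> int:
    """Return the number of characters of readable text in the raw HTML."""
    parser = _TextCounter()
    parser.feed(raw_html)
    parser.close()
    return len(" ".join(parser.chunks))


def looks_client_side_rendered(raw_html: str, min_chars: int = 200) -> bool:
    """Heuristic: very little text in the raw HTML suggests CSR (threshold is an assumption)."""
    return visible_text_length(raw_html) < min_chars


# Hypothetical sample pages for illustration.
CSR_PAGE = (
    '<html><head><title>App</title></head>'
    '<body><div id="root"></div><script src="/static/main.js"></script></body></html>'
)
SSR_PAGE = (
    "<html><body><h1>Example Plumbing Ltd</h1><p>"
    + "We repair boilers, fix leaks and fit bathrooms across Frome. " * 5
    + "</p></body></html>"
)

print(looks_client_side_rendered(CSR_PAGE))  # True: almost no text in the raw HTML
print(looks_client_side_rendered(SSR_PAGE))  # False: the content is in the source
```

To try it on a live site, the raw HTML could be fetched with `urllib.request.urlopen(url).read().decode()` and passed to `looks_client_side_rendered`.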

Reason 2: Your Robots.txt Is Blocking AI Crawlers

Before any crawler reads your content, it checks a file called robots.txt at the root of your website (yoursite.com/robots.txt). This file tells crawlers what they're allowed to access.

5.89% of all websites block GPTBot via their robots.txt [7]. That might sound small, but it's based on an analysis of 140 million websites. A larger dataset of 461 million robots.txt files indicates that the GPTBot block rate is 7.3% [7].

Here's the thing: most small business owners don't realise they might be blocking AI crawlers. Some hosting platforms and website builders set default robots.txt rules that block certain bots. Some security plugins do it. Some developers add broad blocking rules during development and forget to remove them.

If your robots.txt blocks GPTBot, ChatGPT will never see your content. If it blocks ClaudeBot, Claude won't know you exist. If it blocks PerplexityBot, you won't appear in Perplexity's search results.

How to check: Go to yoursite.com/robots.txt in your browser. Look for lines that say "User-agent: GPTBot" followed by "Disallow: /". That means GPTBot is blocked from your entire site. Do the same check for ClaudeBot, PerplexityBot, and Google-Extended.
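
If you'd rather not read the file by eye, the check can be sketched with Python's built-in `urllib.robotparser`. The sample robots.txt content, the `blocked_ai_crawlers` helper and the example.com URL below are made up for illustration; paste in the text of your own file to test it.

```python
from urllib.robotparser import RobotFileParser

# The four crawler tokens named in this article.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt: str, site_url: str = "https://example.com/") -> list[str]:
    """Return the AI crawlers that the given robots.txt text blocks from site_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, site_url)]


# Hypothetical robots.txt: blocks GPTBot everywhere, allows everyone else.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow:
"""

print(blocked_ai_crawlers(SAMPLE_ROBOTS_TXT))  # ['GPTBot']
```

An empty list means none of the four crawlers is blocked from the homepage by that file.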

How to fix it: You or your developer needs to add explicit Allow: / rules for each AI crawler. Adam Clarke's SEO 2025 recommends allowing GPTBot, CCBot, Google-Extended, PerplexityBot, ClaudeBot, and GrokBot at a minimum (Source: clarke_seo2025-robots-001).
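
As a rough sketch of what those rules look like, the Python snippet below generates one "User-agent" group with "Allow: /" for each crawler in the list above, then uses the standard library's `urllib.robotparser` to confirm they take effect even when a blanket block for all other bots is present. The helper name `build_allow_rules` and the example.com URL are assumptions for illustration; your developer may prefer to write the rules by hand.

```python
from urllib.robotparser import RobotFileParser

# Crawler list taken from the recommendation cited above.
RECOMMENDED_CRAWLERS = [
    "GPTBot", "CCBot", "Google-Extended", "PerplexityBot", "ClaudeBot", "GrokBot",
]


def build_allow_rules(crawlers: list[str]) -> str:
    """Build one robots.txt group per crawler, each allowing the whole site."""
    groups = [f"User-agent: {name}\nAllow: /" for name in crawlers]
    return "\n\n".join(groups) + "\n"


rules = build_allow_rules(RECOMMENDED_CRAWLERS)
print(rules)

# Sanity check: combine with a hypothetical blanket block for every other bot.
combined = "User-agent: *\nDisallow: /\n\n" + rules
parser = RobotFileParser()
parser.parse(combined.splitlines())

for bot in RECOMMENDED_CRAWLERS:
    print(bot, parser.can_fetch(bot, "https://example.com/"))  # True for each
print("SomeOtherBot", parser.can_fetch("SomeOtherBot", "https://example.com/"))  # False
```

The printed `rules` text is what would be pasted into robots.txt. A named group takes precedence over the `*` group for the crawler it names, which is why the explicit rules work even alongside a broad block.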

Reason 3: You've Got No Structured Data

Structured data, often called schema markup, is machine-readable code that tells search engines and AI systems what your content is about. It's the translation layer between human-readable web pages and machine-readable information.

Only 12.4% of all domains use any structured data at all [8]. That's 45 million out of 362.3 million registered domains. The other 87.6% are asking AI systems to figure out their content from context alone. And AI systems aren't great at that.

In a controlled test, Search Engine Land compared three nearly identical pages. One had strong schema markup. One had poor schema. One had none. Only the page with well-implemented schema appeared in Google's AI Overview [9]. The other two were excluded despite having nearly identical content.

That test tells you everything. Same words on the page. Same topic. Same quality. The only difference was the structured data. The AI could understand the page with schema. It couldn't properly parse the pages without it.

For small businesses, the most important schema types are:

LocalBusiness schema tells AI exactly who you are, where you are, what you do, and how to contact you. It's the foundation of your digital identity.

FAQPage schema flags your FAQ sections as structured question-and-answer pairs that AI can extract directly into its responses.

HowTo schema turns your step-by-step guides into numbered arrays that AI assistants can serve natively.
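
To make the first of these concrete, here is a minimal sketch of LocalBusiness markup, built in Python and printed as the JSON-LD script tag that would sit in a page's HTML. Every business detail below (name, address, phone number, opening hours) is a placeholder, and the helper name `to_json_ld_script` is an assumption; the property names themselves come from schema.org.

```python
import json

# Placeholder business details for illustration only.
local_business = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",
    "name": "Example Plumbing Ltd",
    "url": "https://www.example.com",
    "telephone": "+44 1373 000000",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "1 Example Street",
        "addressLocality": "Frome",
        "addressRegion": "Somerset",
        "postalCode": "BA11 0AA",
        "addressCountry": "GB",
    },
    "openingHours": "Mo-Fr 08:00-17:00",
}


def to_json_ld_script(data: dict) -> str:
    """Wrap schema.org data in the script tag used for JSON-LD."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


snippet = to_json_ld_script(local_business)
print(snippet)
```

The printed tag goes in the page's HTML, and the details should match what appears on your Google Business Profile and directory listings.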

How to check: Go to Google's Rich Results Test (search.google.com/test/rich-results) and enter your website URL. It'll tell you what structured data you have (if any) and whether it's valid. If the result is empty, you've got no schema markup.
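
A rough local equivalent, which is not a replacement for Google's validator, is to look for JSON-LD blocks in the raw HTML and list the schema types they declare. The sketch below uses only Python's standard library. The helper name `schema_types` and the sample page are assumptions, and it deliberately ignores other formats such as Microdata and nested "@graph" structures.

```python
import json
from html.parser import HTMLParser


class _JsonLdCollector(HTMLParser):
    """Collects the contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self.blocks.append("".join(self._buffer))
            self._in_json_ld = False


def schema_types(raw_html: str) -> list:
    """Return the top-level @type values of every valid JSON-LD block in the page."""
    collector = _JsonLdCollector()
    collector.feed(raw_html)
    collector.close()
    found = []
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # Invalid JSON is skipped, much as a validator would reject it.
        items = data if isinstance(data, list) else [data]
        for item in items:
            if isinstance(item, dict) and "@type" in item:
                found.append(item["@type"])
    return found


# Hypothetical page with one JSON-LD block.
SAMPLE_PAGE = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "LocalBusiness", "name": "Example"}'
    "</script></head><body><h1>Example</h1></body></html>"
)

print(schema_types(SAMPLE_PAGE))  # ['LocalBusiness']
```

An empty list suggests the page has no JSON-LD structured data, which is worth confirming in the Rich Results Test.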

Reason 4: Your Content Doesn't Answer the Questions AI Is Looking For

Even if your site is technically accessible and has structured data, the AI still needs to find content worth citing. This is where most small-business websites fall short.

AI systems don't read your website the way a human does. They're scanning for specific content patterns. Research from Scandolera's SEO, Generative Engine Optimisation (GEO), Answer Engine Optimisation (AEO), and AEO Survival Guide identifies what AI selects for (Source: scandolera_geo-selection-001):

Does the content answer a specific question in the first two sentences? AI doesn't have time for build-up. It needs the answer immediately.

Are external sources cited with data, studies, or statistics? AI trusts content that shows its working.

Is the content structured with clear headings and subheadings? AI uses the heading hierarchy to navigate pages.

Are FAQ formats, lists, or tables used? These create the discrete, extractable answer units that AI can pull directly into its responses.

Can each paragraph be read as a standalone response? If a paragraph only makes sense in the context of the paragraphs around it, AI can't extract it cleanly.

BrightEdge research illustrates this perfectly. LinkedIn, as a domain, performs 41.7 times better than average for AI citations. But 98% of LinkedIn content gets zero AI visibility [10]. The 2% that breaks through is structured educational content: Learning courses and Pulse articles. Social posts, company updates, and thought leadership pieces are completely ignored [10].

The pattern is clear. AI values structure and substance. It ignores marketing fluff, opinion pieces, and content that doesn't directly answer a question.

For small businesses, this usually means rewriting key pages so the answer comes first, not after three paragraphs of company history. It means adding FAQ sections with clear question-and-answer pairs. And it means including cited data where possible, because AI treats claims with sources as more trustworthy than those without.
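
Once an FAQ section has clear question-and-answer pairs, the same pairs can be expressed as FAQPage markup. The sketch below shows one way to generate it in Python. The helper name `build_faq_page` and the two sample questions are invented for illustration; the structure (Question, acceptedAnswer, Answer) follows schema.org.

```python
import json


def build_faq_page(pairs: list[tuple[str, str]]) -> dict:
    """Turn (question, answer) pairs into schema.org FAQPage data."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical FAQ content: the answer comes first, with no build-up.
faq = build_faq_page([
    ("Do you offer emergency call-outs?", "Yes. We offer emergency call-outs across Frome."),
    ("Which areas do you cover?", "We cover Frome, Trowbridge and Warminster."),
])

print(json.dumps(faq, indent=2))
```

The printed JSON would sit inside a `<script type="application/ld+json">` tag on the FAQ page, and the text should match the visible questions and answers.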

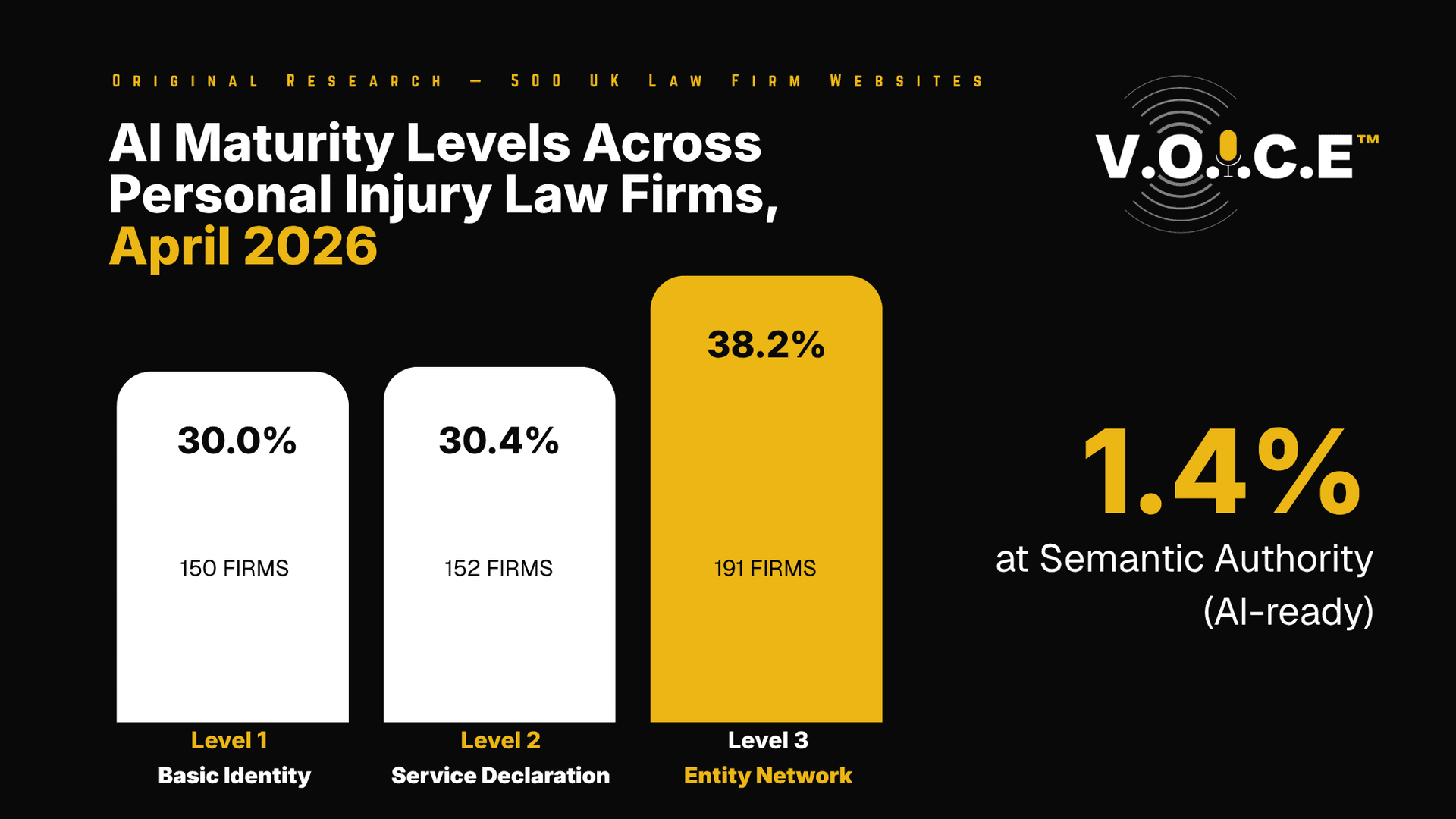

Reason 5: Your Business Doesn't Have Strong Enough Entity Signals

This one's less obvious but equally important. AI systems build their understanding of the world through "entities," which are recognised things: people, businesses, places, concepts. Google's Knowledge Graph stores millions of these entities and their relationships (Source: jones_entity-kg-001).

For AI to recommend your business, it needs to recognise your business as a thing that exists. Not just a website with some text on it. An entity with a clear identity, consistent information, and third-party validation.

Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) is the primary filter for what gets cited in AI answers [11]. AI Overviews use E-E-A-T signals and the Knowledge Graph to determine which sources are sufficiently authoritative to recommend.

And here's a finding that changes the traditional SEO playbook: web mentions now correlate with AI visibility three times as strongly as backlinks [12]. In traditional SEO, backlinks were king. For AI visibility, being talked about across the web matters more than being linked to. The correlation for web mentions is 0.664 compared to just 0.218 for backlinks [12].

ChatGPT Search pulls 58% of its local search sources from business websites, 27% from business mentions on other sites, and 15% from online directories [13]. That tells you the AI is looking at your website first, but it's cross-referencing what the rest of the web says about you.

For local businesses, this means:

Your Google Business Profile needs to be accurate and complete. AI pulls review data and business information from there.

Your business name, address, and phone number (NAP) need to be consistent across every directory, listing, and profile. Inconsistency confuses the AI about whether these are all the same business.
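
A simple way to spot inconsistencies is to normalise each listing and compare it against your website's details. The Python sketch below is one possible approach, assuming UK phone numbers and treating the first listing as the reference. The listings, field names and helper functions are all hypothetical.

```python
import re


def normalise_phone(phone: str) -> str:
    """Reduce a UK phone number to digits in national format (assumes UK numbers)."""
    digits = re.sub(r"\D", "", phone)
    if digits.startswith("44"):
        # Convert +44 1373... or +44 (0)1373... to 01373...
        digits = "0" + digits[2:].lstrip("0")
    return digits


def normalise_text(value: str) -> str:
    """Lowercase and collapse punctuation and spacing so cosmetic differences are ignored."""
    return re.sub(r"[^a-z0-9]+", " ", value.lower()).strip()


def nap_key(listing: dict) -> tuple:
    return (
        normalise_text(listing["name"]),
        normalise_text(listing["address"]),
        normalise_phone(listing["phone"]),
    )


def inconsistent_listings(listings: list[dict]) -> list[str]:
    """Return the sources whose NAP differs from the first (reference) listing."""
    reference = nap_key(listings[0])
    return [item["source"] for item in listings[1:] if nap_key(item) != reference]


# Hypothetical listings for illustration.
LISTINGS = [
    {"source": "Website", "name": "Example Plumbing Ltd",
     "address": "1 Example Street, Frome, BA11 0AA", "phone": "01373 000000"},
    {"source": "Google Business Profile", "name": "Example Plumbing Ltd.",
     "address": "1 Example Street, Frome BA11 0AA", "phone": "+44 1373 000000"},
    {"source": "Local directory", "name": "Example Plumbing",
     "address": "1 Example St, Frome", "phone": "01373 000001"},
]

print(inconsistent_listings(LISTINGS))  # ['Local directory']
```

Exact matching after normalisation is strict on purpose: it flags abbreviations such as "St" for "Street", which are the kind of small differences worth tidying up.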

You need to be mentioned on other websites. Reviews, local press, industry directories, forum posts. Each mention is a signal to the AI that your business is real and active.

You need to create content that demonstrates real expertise. Not generic blog posts. Content that shows you know what you're talking about, backed by experience and evidence.

Reason 6: Your Website Is Too Slow

This one's less dramatic than the rendering issue, but still matters. Pages with severely poor Core Web Vitals performance, particularly slow Largest Contentful Paint (LCP), are less likely to perform well in AI contexts [14].

The research is clear that site speed isn't an AI visibility growth lever. Being fast doesn't give you an advantage. But being severely slow creates a real disadvantage [14]. Pages that load slowly have higher abandonment rates, lower engagement, and weaker behavioural signals. All of which feed back into how AI systems assess content quality.

The thresholds to aim for: LCP under 2.5 seconds, Time to First Byte under 200ms, and Cumulative Layout Shift under 0.1 (Source: vale_aeo-performance-001).
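
Those thresholds can be written down as a small check. The sketch below is in Python, with made-up measurements and assumed field names (`lcp_seconds`, `ttfb_ms`, `cls`); the limits are the ones quoted above.

```python
# Thresholds quoted above: each measurement should be under its limit.
THRESHOLDS = {
    "lcp_seconds": 2.5,  # Largest Contentful Paint
    "ttfb_ms": 200,      # Time to First Byte
    "cls": 0.1,          # Cumulative Layout Shift
}


def failing_metrics(measurements: dict) -> list[str]:
    """Return the names of metrics that are not under their threshold."""
    return sorted(
        name for name, limit in THRESHOLDS.items() if measurements[name] >= limit
    )


# Hypothetical measurements for a slow page.
slow_page = {"lcp_seconds": 3.8, "ttfb_ms": 150, "cls": 0.05}

print(failing_metrics(slow_page))  # ['lcp_seconds']
```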

How to check: Run your site through Google PageSpeed Insights (pagespeed.web.dev). It'll give you a performance score and flag specific issues.

What to Do Next: A Practical Checklist

If you've read this far and you're worried, good. That means you're taking it seriously. Here's a prioritised checklist based on everything above.

Immediate (Today):

Run an AI visibility checker to see where you currently stand. Free tools like Am I Visible on AI (amivisibleonai.com) take 30 seconds and cover four AI platforms. Ask ChatGPT, Claude and Perplexity directly about your business. Record the results.

This week:

Check your robots.txt for AI crawler blocks. Check your page source for client-side rendering issues. Run Google's Rich Results Test to see if you have any structured data. Run PageSpeed Insights to check your Core Web Vitals.

This month:

If your site blocks AI crawlers, update the robots.txt file. If you have no structured data, implement the LocalBusiness schema at a minimum. Create or restructure your FAQ page with clear question-and-answer pairs. Review your Google Business Profile for accuracy and completeness.

This quarter:

If your site uses client-side rendering, plan a migration to server-side rendering. This is the biggest single fix for AI invisibility, but also the most involved. Implement FAQPage and HowTo schema markup. Create an llms.txt file for your site. Build an ongoing content plan that answers the specific questions your target customers are asking AI.

If you don't know where to start or you want a professional assessment, the V.O.I.C.E. scan at scopesite.co.uk/voice is designed for exactly this. It's a free Pro scan worth £300 that identifies every gap between where you are and where you need to be for AI visibility across SEO and Answer Engine Optimisation (AEO).

FAQ - Frequently Asked Questions

Why does my competitor show up on ChatGPT but I don't?

The most likely explanation is technical, not content quality. Your competitor's website might be server-side rendered (making it readable by AI crawlers), have structured data markup (helping AI understand the content), or have stronger entity signals across the web (reviews, mentions, consistent directory listings). Run an AI visibility checker on both your site and theirs to see the specific differences.

Can I fix AI visibility myself, or do I need a developer?

Some fixes are straightforward enough to do yourself. Checking and updating your robots.txt, improving your Google Business Profile, and restructuring content to answer questions directly are all doable without technical help. But moving from client-side to server-side rendering, implementing schema markup, and creating an llms.txt file typically requires a developer or an agency with AI visibility experience.

How long does it take to become visible to AI search?

It depends on what's broken. Fixing a robots.txt block can take effect within days as crawlers revisit. Implementing schema markup can show results within weeks as AI systems reindex your content. A full site migration from CSR to SSR typically takes 1 to months, depending on complexity. Building entity signals through reviews, mentions, and content is an ongoing process that compounds over time.

Does traditional SEO still matter if AI search is taking over?

Absolutely. SEO is the foundation. Your site still needs to rank on Google, load quickly, and be technically sound. But SEO alone doesn't guarantee AI visibility. Think of SEO as the minimum requirement. Answer Engine Optimisation (AEO) and Generative Engine Optimisation (GEO) are the layers you build on top to make sure AI platforms can find, understand, and recommend your business. We've covered the difference between all three in our Generative Engine Optimisation (GEO) vs SEO vs Answer Engine Optimisation (AEO) guide.

What is the single most important thing I can do right now?

Run an AI visibility check. Go to amivisibleonai.com or use the free V.O.I.C.E. scan at scopesite.co.uk/voice. You can't fix what you can't measure. The check takes under a minute and tells you whether AI crawlers can access your site, whether you have structured data, and whether your content is structured for AI extraction. That gives you a clear starting point.

Sources

1. SOCi via National Law Review, "AI search recommends only 1.2% of local businesses" (March 2026) - natlawreview.com

2. Which?, "Consumer use and attitudes towards AI search tools" (December 2025) - which.co.uk

3. Anthropic, OpenAI, Perplexity, Google, "AI crawler documentation" (March 2026) - privacy.claude.com

4. SearchVIU, "AI crawlers and JavaScript rendering" (November 2025) - searchviu.com

5. Web Almanac by HTTP Archive, "Performance" (November 2024) - almanac.httparchive.org

6. Onely, "Google needs 9x more time to crawl JS than HTML" (November 2022) - onely.com

7. Ahrefs, "AI bot block rates" (May 2025) - ahrefs.com

8. Epic Notion / Web Data Commons, "The local business schema advantage" (April 2025) - epicnotion.com

9. Search Engine Land, "Schema, AI Overviews and structured data visibility" (September 2025) - searchengineland.com

10. BrightEdge, "LinkedIn Learning and Pulse articles emerge as top AI citation sources" (October 2025) - brightedge.com

11. Google Search Central, "Google Search and AI content" (February 2023, current position) - developers.google.com

12. Adrien Thomas via LinkedIn, "Domain authority vs AI citation" (February 2026) - linkedin.com

13. BrightLocal, "Uncovering ChatGPT Search sources" (December 2024) - brightlocal.com

14. Search Engine Land, "Core Web Vitals and AI search visibility analysis" (January 2026) - searchengineland.com

Studios is based in Beckington, Frome, Somerset, BA11. We build AI-visible websites and implement the V.O.I.C.E. methodology for small businesses across Frome, Trowbridge, Warminster, Shepton Mallet, Westbury, Somerset and Wiltshire. Find out why AI can't see your business with a free V.O.I.C.E. scan at voice.scopesite.co.uk