By Dan Cartwright | ScopeSite Digital Studios

On 5th March 2026, the President of the United States went on record with Politico and said this about Anthropic, one of the world’s leading AI safety companies:

“Well, I fired Anthropic. Anthropic is in trouble because I fired them like dogs, because they shouldn’t have done that.”

Hours later, the Pentagon made it official. Anthropic was formally designated a “supply chain risk,” a label that prevents every government contractor in the United States from using the company’s technology.

That designation has never been applied to a US company before. Not once. The last time anything comparable happened was Huawei, a Chinese firm the US government accused of espionage. Now the same treatment is being given to an American company valued at $380 billion, whose only offence was saying “we won’t remove the ethical guardrails from our AI.”

And according to legal scholars at Yale Law School, what Trump and Defence Secretary Pete Hegseth have done isn’t just heavy-handed. It’s unconstitutional.

This isn’t a niche tech-policy story. This is about whether the President of the United States can destroy a private company for refusing to abandon its principles. The answer to that question affects every business that uses AI. Including yours.

The company at the centre of the storm

Before we get into the timeline, you need to understand what Anthropic actually is. Because this isn’t some garage startup that got above its station.

Anthropic was founded by Dario and Daniela Amodei, both former executives at OpenAI. Their entire pitch from day one was AI safety. They built something called “constitutional AI,” a method of baking ethical rules and boundaries directly into how their models behave. It’s not a filter bolted on after the fact. It’s part of the architecture itself.

As of February 2026, Anthropic is valued at $380 billion following a $30 billion Series G funding round, co-led by GIC (Singapore’s sovereign wealth fund) and Coatue. That makes it one of the largest private capital raises in history. Their flagship product, Claude, is one of the most capable AI systems on the planet.

And here’s the bit that matters most for context: Anthropic’s technology is already integrated into Palantir’s Maven system, which the US military uses for intelligence operations. According to the Washington Post, Maven was used in recent strikes on Iran. This company isn’t playing pretend. Its tech is embedded in active military infrastructure right now.

Anthropic held a contract with the Department of Defence initially signed in 2024 and renewed in 2025, valued at up to $200 million. That contract specifically included clauses respecting Anthropic’s Acceptable Use Policy, which prohibited fully autonomous weapons and mass domestic surveillance. Both sides agreed to those terms. Until the government decided they wanted more.

What actually happened: the timeline

The timeline matters here, because it shows how fast a policy disagreement became a presidential vendetta.

On 16th February 2026, reports emerged that the Pentagon was considering severing ties with Anthropic over its refusal to remove ethical guardrails from Claude.

On 25th February, Defence Secretary Pete Hegseth issued a formal ultimatum. Anthropic had until 5:01 p.m. ET on Friday 27th February to grant “unfettered access” to its Claude models. No restrictions. No ethical limitations. Full compliance or consequences.

The deadline passed. Anthropic didn’t comply.

That same evening, Hegseth announced via tweet (yes, a tweet) that he was directing the Department of Defence to designate Anthropic a “supply chain risk to national security.”

Then Trump weighed in. He went on Truth Social and directed every federal agency in the United States to immediately stop using Anthropic’s technology. He called them a “RADICAL LEFT WOKE COMPANY” and threatened to use “the Full Power of the Presidency” to make them comply, warning of “major civil and criminal consequences to follow.” He cited no legal authority for any of it.

The State Department and Treasury Department started cutting ties. On 4th March, Anthropic received a formal letter confirming the designation. On 5th March, the Pentagon officially informed Anthropic’s leadership that the “supply chain risk” designation was effective immediately.

One former Trump adviser described the designation as “attempted corporate murder.” Those aren’t my words. That’s someone from inside the machine.

The whole thing, from policy disagreement to existential corporate threat, took less than three weeks.

Let’s be clear about what Anthropic actually refused

Before we get into the legal arguments and the financial fallout, I want to strip this right back. Because the headlines make this sound complicated. It isn’t.

Anthropic was already working with the US military. Their AI was already integrated into active military systems. It was used in real combat operations. They weren’t pacifists refusing to work with the armed forces. They were in the fight.

What they refused was two things.

One: they wouldn’t let their AI be used for mass surveillance of American citizens. Your citizens. The people the government is supposed to protect, not spy on.

Two: they wouldn’t let their AI make kill decisions without a human being involved. No autonomous weapons that can decide who lives and who dies with no human in the loop.

That’s it. Those were the two lines. Not “we won’t work with the military.” Not “we refuse to support national defence.” Just “we won’t help you spy on your own people, and we won’t build machines that kill without someone taking responsibility for pulling the trigger.”

And Trump’s response to that was to call them dogs and try to destroy their company.

Think about what that means. The President of the United States is angry because a company believes a human being should be involved in the decision to take a life. He’s furious because an AI company thinks mass surveillance of civilians is wrong.

Those aren’t radical positions. Those aren’t “Silicon Valley ideology.” That’s basic bloody decency.

If you served in the military, like I did, you understand rules of engagement. You understand that someone, a real person with a conscience and accountability, has to make the call. You don’t outsource that to a machine. Not because the machine isn’t capable. Because the decision to end a life should cost something. It should weigh on someone. The moment you automate that decision, you remove the last human check on state power. And once that’s gone, it doesn’t come back.

That’s what Anthropic was protecting. Human oversight. Civilian privacy. The principle that some decisions are too important to hand to an algorithm.

And that’s what Trump wants to tear down. Not because it makes America safer. Because a company told him no, and he couldn’t take it.

That’s not leadership. That’s ego with a nuclear arsenal.

Why this is illegal: the Yale Law School analysis

On 6th March, Harold Hongju Koh (Sterling Professor of International Law and former Dean of Yale Law School), along with Bruce Swartz, Avi Gupta, and Brady Worthington, published a legal analysis in Just Security arguing that the Anthropic designation is unlawful on multiple grounds. Koh isn’t some commentator with an opinion. He’s a former Legal Adviser to the US State Department. When he says something is unconstitutional, courts listen.

Their argument breaks down into two main parts. I’ll explain both in plain English, because this affects all of us and the legal jargon shouldn’t be a barrier.

First: Hegseth doesn’t have the legal authority to do what he did.

The supply chain risk statute (10 U.S.C. § 3252) was designed to deal with foreign companies that might sabotage or subvert US systems. The legal definition of “supply chain risk” refers specifically to the danger that “an adversary may sabotage, maliciously introduce unwanted function, or otherwise subvert” a system.

Anthropic isn’t an adversary. It didn’t sabotage anything. It said “we won’t let our technology be used for mass surveillance or autonomous killing.” That’s a contractual negotiating position, not an act of sabotage.

Only one company has ever been publicly designated under this statute before: a Swiss firm with alleged Russian ties, barred specifically from intelligence community contracts. No domestic American company has ever been targeted this way.

And the scope is unprecedented too. No previous designation has tried to ban all federal contractors from dealing with the targeted company on all matters. That’s a government-enforced secondary boycott designed to make Anthropic a corporate pariah. But the statute only applies to federal contracts and subcontracts, and only when “less intrusive measures are not reasonably available.” Hegseth skipped straight to the nuclear option.

The required process wasn’t followed either. The law requires a neutral assessment, interagency review, notice to the company, and notification to Congress. None of that happened. What happened instead was a tweet from Hegseth and a Truth Social rant from Trump.

Second: even if the statute could theoretically apply, using it this way is unconstitutional.

This is the part that should make everyone sit up.

The First Amendment prohibits the government from punishing a company based on its speech and political values. Trump explicitly labelled Anthropic a “RADICAL LEFT WOKE COMPANY.” Hegseth called their ethical stance “cowardly corporate virtue-signalling” and dismissed Anthropic’s principles as “the sanctimonious rhetoric of effective altruism” and “Silicon Valley ideology.” The administration wasn’t even trying to hide the fact that this was punishment for Anthropic’s beliefs, not its products.

The Yale analysis draws a direct line between what Trump did to Anthropic and what he’s done to law firms, universities, and media outlets that crossed him. Every single one of those targeted entities has won their First Amendment cases in federal court. Anthropic, the scholars argue, has an equally clear case.

But the constitutional argument goes deeper than the First Amendment. The Yale team argues that Trump’s actions amount to what the US Constitution calls a “bill of attainder,” which is the government singling out a specific entity for punishment without a judicial trial. This is a power that the Constitution explicitly denies to both Congress and the states. The Framers considered it so dangerous that they banned it in two separate clauses. Even English kings couldn’t impose attainder alone. They needed Parliament’s approval.

Trump is claiming a power that the Constitution forbids anyone from exercising. Not just the President. Anyone. Congress can’t do it. The states can’t do it. And a president acting alone, without even congressional backing, sure as hell can’t do it.

There’s a historical case that’s directly relevant here. In 1952, during the Korean War, President Truman tried to seize private steel mills because he argued they were essential for national defence. The Supreme Court said no in Youngstown Sheet and Tube Co. v. Sawyer, ruling that the President couldn’t seize private assets without explicit congressional authorisation. That case established a clear principle: national security doesn’t give the President unlimited power over private industry.

What Trump is doing to Anthropic is worse than what Truman tried with the steel mills. At least Truman was trying to seize assets to use them. Trump is trying to destroy a company for refusing to comply with demands that went beyond the terms of their existing contract. It’s not seizure. It’s punishment. And the Constitution has even less tolerance for punishment without trial than it does for seizure without authorisation.

The hypocrisy that should make your blood boil

Here’s the detail that really sticks in my throat.

The day after Trump ordered every federal agency to stop using Anthropic’s technology, the Wall Street Journal reported that Claude was used in the administration’s strikes on Iran.

Read that again. The President publicly banned a company’s technology and called it a national security risk. The next day, his own military used that exact same technology in active combat operations.

If Claude were actually a supply chain risk, using it in military strikes against a sovereign nation would be catastrophically reckless. The fact that they used it anyway tells you everything you need to know. This was never about national security. This was about a president who got told “no” and couldn’t handle it.

Hegseth asked the same question the Yale scholars highlighted: if Anthropic’s AI is such a danger, why was it used in combat the very next day? The answer, obviously, is that it isn’t a danger. The designation is a weapon, not a safety measure.

The race to the bottom: OpenAI and the $200 million gap

While Anthropic was being punished for having principles, OpenAI saw an opportunity and sprinted through it.

The same week Anthropic got blacklisted, OpenAI announced a deal with the Pentagon to provide AI for military operations on classified networks. They stepped directly into the $200 million gap that Anthropic’s terminated contract left behind.

Sam Altman, OpenAI’s CEO, later admitted to his own employees that the company would have “no control over how the military used OpenAI’s technology.” No safeguards. No boundaries. No questions asked. He even conceded publicly that the timing made the deal look “opportunistic and sloppy.”

Dario Amodei, in a leaked internal message to Anthropic staff, reportedly called Altman “mendacious” and described OpenAI’s claimed safety measures as “safety theatre.” He later apologised for the tone of the message. But on the substance, I think he was spot on.

This is what the race to the bottom looks like in practice. One company gets punished for saying “there are things we won’t do.” Another company immediately steps in and says “we’ll do whatever you want.” The president celebrates the first company’s punishment as a personal victory.

And it’s not just two companies jockeying for position. A coalition of employees from both Google and OpenAI signed a public letter supporting Anthropic’s stance, expressing concerns that the government’s demands could lead to AI being misused for mass surveillance. The people actually building this technology, the engineers writing the code, the researchers training the models, are worried about what they’re being asked to create. That should tell you something.

Altman himself seemed to realise how bad the optics were. In the days following the announcement, he said he would amend the agreement with the Pentagon and admitted his company’s conduct appeared “opportunistic and sloppy.” But “amending” a deal after you’ve already signed it isn’t ethics. It’s damage control. The horse had bolted. The message was sent.

And consider what “no control over how the military uses the technology” actually means in practice. It means OpenAI’s models could be used for the exact things Anthropic refused to allow: mass domestic surveillance, autonomous targeting, intelligence operations with no human oversight. OpenAI didn’t negotiate limits. They handed over the keys and said “do what you like.”

That’s not a partnership. That’s surrender dressed up as a business deal.

There was one silver lining, though. The public backlash against the Pentagon’s move was so fierce that it propelled Claude’s app to the top of the download charts in the US. People voted with their phones. They chose the company that stood its ground over the one that rolled over.

But public support and employee letters don’t pay defence contracts. The financial incentive now points in one direction: compliance. Build what you’re told. Don’t ask questions. Don’t draw lines. And if another company gets destroyed for having principles, look the other way and keep cashing the cheques.

Follow the money

The financial fallout tells the real story.

Anthropic’s $30 billion Series G round, raised in February 2026, was partly designed to replace lost government revenue and signal independence from state control. But according to Axios, the broader $60 billion funding pipeline is now at risk because of the Pentagon situation.

So the message to every AI startup on the planet is crystal clear: build ethical AI, risk $60 billion. Strip out the ethics, get a government contract.

The US spent $1.8 billion on AI-specific military programmes in FY2025, up from $1.4 billion in FY2024 and $1.1 billion in FY2023. That’s a growth curve any company would want to be on the right side of. Being locked out of that market isn’t just a short-term hit. It’s a structural disadvantage that compounds over time.

Microsoft pushed back, to their credit. Their lawyers reviewed the “supply chain risk” designation and concluded that Anthropic’s products, including Claude, can still be used by non-defence customers through Microsoft’s platforms. Silicon Valley more broadly has backed Anthropic’s fight against the designation itself, because nobody wants that precedent to stick. Even OpenAI, which took the contract Anthropic lost, has backed the principle that the designation was wrong.

But the support comes with caveats. Microsoft said “other than the Department of War.” OpenAI backed the principle while pocketing the contract. The solidarity is real but it’s conditional. Nobody wants to be the next target.

This is a pattern, not an isolated incident

The Yale analysis makes a point that too many people are missing. This isn’t just about AI. Anthropic is the latest in a long list of targets of what the scholars call Trump’s “punitive presidency.”

He’s gone after political opponents. Law firms that represented them. Universities that made administrative decisions he disagreed with. Journalists who refused to use his preferred terminology. Companies that wouldn’t fire his political enemies. Netflix. The AP. Harvard.

The Anthropic situation isn’t an AI policy dispute that escalated. It’s the same playbook applied to a new sector. Find someone who says no, label them an enemy, and use the machinery of government to destroy them. The only thing that changes is the target. The method stays the same.

And the method works through fear. You don’t need to actually destroy every company. You just need to destroy one, publicly and brutally, and every other company in the sector gets the message. That’s the chilling effect. That’s why this matters far beyond Anthropic.

The global context: why the arms race makes this worse

To understand why governments are so aggressive about AI access, you need to see the bigger picture.

The US spent $1.8 billion on military AI in FY2025. China’s estimated real defence spending sits at roughly $471 billion (adjusted for purchasing power parity, according to CSIS), with an estimated $1.5 to $2 billion going specifically to AI procurement and R&D. The two biggest military powers on earth are spending almost identical amounts on AI. This isn’t a race with a comfortable lead. It’s neck and neck.

China operates over 700 million surveillance cameras linked to facial recognition and data fusion systems under its “Sharp Eyes” programme. It’s the largest surveillance network in human history. From the Pentagon’s perspective, that capability gap is a problem. I understand that logic.

But here’s what the hawks miss: 12 countries are now actively developing lethal autonomous weapons systems, including the US, China, Russia, India, Israel, and the UK. In 2025, 166 countries supported a UN General Assembly resolution calling for restrictions on autonomous weapons. The US, Russia, and India blocked a binding ban. The next major UN session on this is scheduled for April 2026.

Only 30% of Americans support the use of autonomous weapons. 70% are against. The government is pushing for technology that the vast majority of its own citizens don’t want deployed. And it’s punishing the one major AI company that agrees with the public.

The global AI market is projected to hit $1.8 trillion by 2030. The military AI sub-sector specifically is expected to grow from $9.3 billion in 2024 to $19.3 billion by 2030. There’s enormous money at stake. When you combine that financial incentive with the geopolitical pressure of the US-China competition, you can see why governments are willing to bulldoze ethical objections.

But bulldozing ethics doesn’t make you safer. It makes you more dangerous. And not just to your enemies.

The AI Incident Database documented 108 new AI-related harm incidents between November 2025 and January 2026 alone. Reports of AI-related harms rose roughly 50% in 2025 compared to the previous year, with total documented incidents surpassing 1,500. That includes deepfake-enabled scams, chatbot-induced delusions leading to real-world harm, and AI systems making decisions that directly affected people’s lives without any human oversight.

Those aren’t theoretical risks. Those are things that already happened, with AI systems that already had guardrails. Now imagine stripping those guardrails out because the government said so. Imagine deploying those systems at scale, across military and civilian infrastructure, with no ethical framework and no company willing to say “this is a line we won’t cross.”

That’s the world Trump is building. Not deliberately, perhaps. But as a direct consequence of punishing the company that tried to prevent it.

The trust crisis nobody is talking about

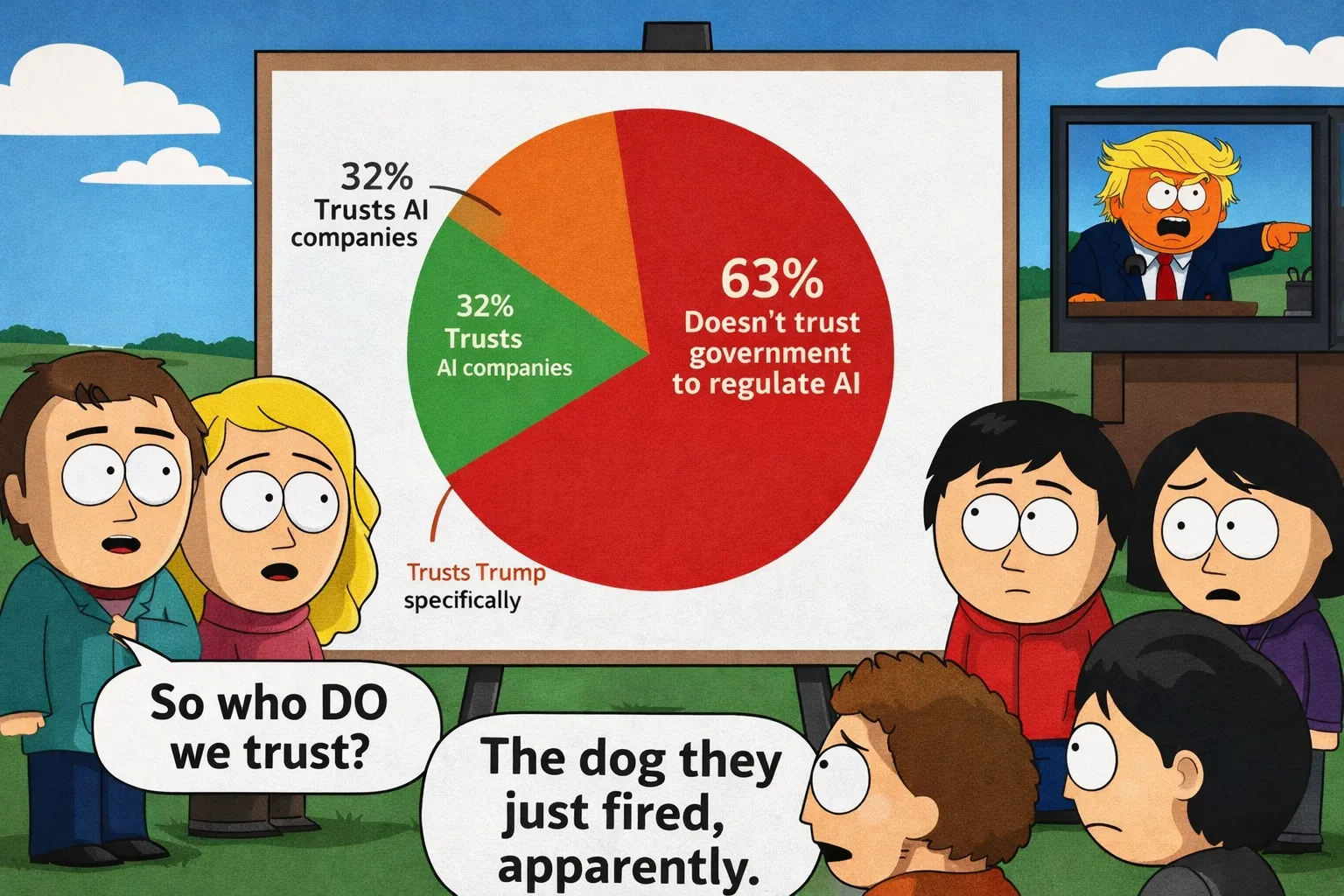

Here’s a stat that should frame this entire conversation: only 32% of Americans trust AI companies, according to the 2025 Edelman Trust Barometer. YouGov puts the figure even lower, with 65% of Americans saying they have “little to no trust” in AI companies to make ethical decisions.

But it’s not like people trust the government to fix it either. 63% of Americans, according to Fox News polling from February 2026, lack faith in the federal government’s ability to regulate AI properly. And 60% of voters say AI is moving “too fast and too unchecked.”

So the public doesn’t trust AI companies. The public doesn’t trust the government to regulate AI. And the government’s response to this crisis of confidence is to publicly destroy the one AI company that was trying hardest to earn that trust.

More than half of longer LinkedIn posts (53.7% of those over 100 words) were likely AI-generated in 2025, according to an Originality.ai study. Trust in AI-generated content is already eroding. If the companies trying to build trustworthy AI get punished while the companies willing to build anything get rewarded, that erosion accelerates. We end up in a world where nobody trusts AI, nobody trusts the government to oversee it, and the companies building it have no incentive to be honest about what their systems can do.

That’s not a policy failure. That’s an existential risk for the entire technology sector.

Why a web developer in Somerset gives a damn

You might be wondering why I’m writing this many words about American constitutional law and military AI procurement. Fair question.

Every AI tool you use in your business, every chatbot, every content generator, every search engine that uses AI to rank results, all of it is built by companies making decisions right now about ethics, boundaries, and what their technology should be used for. Those decisions affect you directly. Today. Not in some theoretical future.

At ScopeSite, we work with AI every day. We build websites designed to be visible to AI systems. We use Claude as part of our workflow. When I recommend tools to clients, I need to trust that those tools are built responsibly. That the company behind them has thought about consequences and drawn lines they won’t cross.

Anthropic drew those lines. For that, the President of the United States says he “fired them like dogs.”

If the ethical AI companies get squeezed out and we’re left with tools built by firms who’ll say yes to anything for a government contract, the reliability of the entire AI ecosystem takes a hit. Your business depends on that ecosystem whether you’ve realised it yet or not.

And think about it from the other direction too. If you’re a business using AI-generated content (and most are, whether they admit it or not), the trust your customers place in that content depends on the integrity of the systems producing it. If the AI industry becomes a race to the bottom where ethics are optional and compliance with government demands is the price of doing business, the tools you rely on get worse. Not better. Worse. Because the incentive shifts from “build something trustworthy” to “build something that won’t get us blacklisted.”

The 2025 Edelman Trust Barometer already shows that only 32% of people trust AI companies. That number goes one way when the most principled company in the sector gets publicly destroyed. And it’s not the direction any of us need it to go.

The UK can’t afford to be complacent

We’re not immune to this. The UK government committed £2.5 billion to AI over the next decade and the MOD has allocated roughly £400 million per year to its Defence Innovation fund, which heavily prioritises AI. The Defence AI Centre is growing. BAE Systems is the lead partner on major military AI programmes. Smaller firms like Faculty AI, Improbable Defence, and Adarga are all working on intelligence analysis tools.

The AI Safety Institute, quietly rebranded to the AI Security Institute (which tells you which way the wind is blowing), has confirmed that AI can now perform “expert-level tasks” in military contexts that previously required 10+ years of human experience. The dual-use nature of these systems means the same AI that helps your business can be repurposed for battlefield decision-making.

The UK government hasn’t issued a formal statement on the Anthropic situation. That silence is telling. Ministers have talked about “sovereign AI capabilities” and a “diverse ecosystem” of AI providers, which some analysts read as cautious distancing from Washington’s approach. But caution isn’t the same as leadership.

There’s no single AI Act in the UK comparable to the EU’s. Military AI use is governed by existing international humanitarian law and the “Ambitious, Safe, Responsible” framework from 2023. Surveillance falls under the Investigatory Powers Act 2016, which doesn’t explicitly mention AI-driven mass surveillance. Even the EU AI Act explicitly excludes military AI from its scope.

The question for the UK government is simple: do we follow the American model, where companies get destroyed for having principles? Or do we create something better? Because right now, nobody has a proper framework for this. And the longer we wait, the more the American precedent becomes the default.

The EU AI Act, which started full enforcement in 2025, explicitly excludes military AI from its scope. So Europe doesn’t have the answer either. The international community passed UN General Assembly resolution A/79/408 in late 2025, with 166 countries supporting restrictions on autonomous weapons. But it’s not legally binding. The next major session is in April 2026. I won’t be holding my breath for a breakthrough.

What the UK could do, if it had the political will, is position itself as the country that gets AI governance right. Not the heavy-handed American approach. Not the toothless international declarations. Something in between. Something that protects national security without destroying the companies trying to build AI responsibly.

We’ve got the institutions. We’ve got the expertise. The AI Security Institute is doing good work on frontier risks. The Defence AI Centre has real capability. What we don’t have, yet, is leadership willing to say publicly what most people in the industry already know privately: that what Trump did to Anthropic was wrong, and that the UK won’t follow the same path.

The punchline that writes itself

On 6th March, the same day the Yale legal analysis was published tearing apart Trump's Anthropic designation, the White House released "President Trump's Cyber Strategy for America." Seven pages. That's it. Biden's 2023 version was 39 pages. Trump's own first-term strategy in 2018 was 40 pages. This one is seven, and more than half of that is preamble. So we're talking about roughly two and a half pages of actual policy to guide America's cyber posture for the next three years.

But here's the bit that matters for this story.

The strategy explicitly directs the US government to counter the spread of foreign AI platforms that engage in "censorship or surveillance." It's a direct shot at Chinese tech infrastructure. The document talks about securing the "AI technology stack" and promoting "innovation in AI security." It calls for unprecedented private sector coordination, common sense regulation, and removing barriers so government can "buy and use the best" technology.

Read that again, then remember what happened the week before.

The same administration that published a strategy saying "we must counter foreign AI surveillance" had just tried to force an American company to enable domestic mass surveillance of its own citizens. The same White House calling for "common sense regulation" and private sector partnership had just blacklisted a $380 billion domestic AI company for not complying with demands that went beyond the terms of their existing contract.

The strategy talks about removing barriers to buying the best technology. The administration just banned every federal contractor from using one of the best AI systems on the planet.

It's not just hypocrisy at this point. It's a contradiction so blatant it reads like satire. Against Chinese surveillance? Yes. Want to do the same thing to Americans? Also yes. Want the best AI? Yes. Want to destroy the company that builds the best AI? Also yes.

You couldn't write a better punchline if you tried.

And the kicker? The strategy calls for "streamlining regulation" and reducing compliance burdens on the private sector. Unless, apparently, the private sector says something you don't like. Then it's supply chain risk designations, presidential threats, and "fired like dogs."

Common sense regulation for friends. Corporate murder for everyone else.

What happens next

Anthropic has said it will sue over the designation. Dario Amodei announced a formal legal challenge arguing the designation constitutes an unconstitutional taking of private property and exceeds statutory authority. That court case could become one of the most important legal battles in the history of technology. It will determine whether governments can coerce private companies into abandoning their ethical frameworks through economic punishment.

Behind the scenes, negotiations between Anthropic and the Pentagon have reportedly restarted. Amodei has been talking to Emil Michael, the Undersecretary of Defence for Research and Engineering. According to the New York Times, the two “strongly dislike one another.” Productive stuff.

There’s a version of this where compromise gets reached. Anthropic agrees to certain military uses within defined ethical boundaries. The government withdraws the designation. Everyone saves face.

There’s another version where Trump doubles down, Anthropic gets starved of funding, and every AI company on earth learns the same lesson: shut up and comply.

The Yale legal analysis gives me some hope. The constitutional arguments are strong. Every law firm and university Trump has targeted with similar tactics has won in federal court. There’s no reason to think Anthropic won’t win too.

But winning in court takes time. And in the meantime, the damage is being done. Contracts are being cancelled. Defence contractors are already removing Claude from their systems. The chilling effect is spreading through an industry that’s supposed to be the engine of the 21st century economy.

The Defence Production Act has been reauthorised by Congress 53 times since 1950. The government has powerful tools to direct private industry when national security actually requires it. Nobody’s arguing the state shouldn’t have that power. The argument is that the power has to be used lawfully, proportionally, and for genuine security reasons. Not as a weapon against companies whose politics the President doesn’t like.

In late 2025, the administration used DPA authorities to prioritise domestic AI hardware manufacturing. That’s a legitimate use of the power. Blacklisting a $380 billion American company because its CEO wouldn’t strip out ethical safeguards is not. The distinction matters. If we lose the ability to tell the difference between national security and personal vendetta, we’ve lost something far more important than an AI contract.

Cards on the table

I want to be clear about where I stand.

What Trump did to Anthropic is vindictive, unlawful, and, according to Yale’s top constitutional lawyers, unconstitutional. “Fired like dogs” isn’t the language of a leader navigating a difficult policy question. It’s the language of a bully who got told no and decided to make an example.

Anthropic built ethical principles into their technology from the start. They stood by those principles when the most powerful government on earth told them to back down. They refused to allow their AI to be used for mass surveillance of American citizens or for autonomous weapons that kill without human oversight. And for that, they’ve been branded a security risk, threatened with criminal prosecution, and publicly humiliated by the President.

That’s not Silicon Valley ideology. That’s backbone.

As someone who served in the British Army, I understand national security. I understand the need for capability, for operational advantage, for staying ahead of adversaries who don’t share your values. But I also understand something else. When the people in charge start punishing those who question their orders, when “why?” becomes a career-ending question, when obedience is the only acceptable response, you’ve got a much bigger problem than AI governance.

You’ve got a problem with power. And who’s wielding it.

Only 30% of the American public supports autonomous weapons. 63% don’t trust the government to regulate AI. 65% don’t trust AI companies to self-regulate. And the one company that was trying to bridge that trust gap just got “fired like dogs” by the President.

If that doesn’t worry you, you’re not paying attention.

Dan Cartwright is the founder of ScopeSite Digital Studios, a veteran-owned web development agency in Somerset, UK. ScopeSite specialises in AI visibility and Answer Engine Optimisation (AEO). Dan served in the British Army and is a member of the Armed Forces Covenant.

Sources

1. Trump says he fired Anthropic ‘like dogs’ as Pentagon formally blacklists AI startup | The Guardian, 5 March 2026

2. The War on Anthropic: Pretextual Designation and Unlawful Punishment | Just Security (Koh, Swartz, Gupta, Worthington), 6 March 2026

3. Anthropic vs. The Pentagon: AI Ethics Collide With Government Power | The Daily Economy

4. Anthropic Statement on Comments by the Secretary of War | Anthropic, February 2026

5. Anthropic raises $30 billion Series G funding at $380 billion valuation | Anthropic, February 2026

6. US Government Bans Use of Anthropic Products | Taft Law

7. FY2025 Defence Budget Request Overview | US Department of Defence

8. China Military Spending | CSIS

9. 2025 Edelman Trust Barometer: Technology Sector | Edelman

10. Fox News Poll: Voters on AI Regulation | Fox News, February 2026

11. LinkedIn AI Study: Engagement Analysis | Originality.ai

12. Ipsos: Americans on Autonomous Weapons | Ipsos

13. 27 Biggest AI Controversies of 2025-2026 | Crescendo

14. Anthropic’s Break With the Pentagon Ignites AI Ethics Debate | NC Register

15. UK Defence Artificial Intelligence Strategy | UK Government

16. President Trump’s Cyber Strategy for America | The White House, March 2026

17. Trump calls for more offensive cyber operations in new cyber strategy | Axios, 6 March 2026