The Morning Of

Now, on the morning of 16 April this year, in the digital equivalent of the Frankfurt Motor Show, Anthropic pulled back the silk dust sheet on what it solemnly described as the most capable artificial intelligence model ever built by human hands. There was the obligatory PR bunting. There were the benchmark numbers, hammered into glossy slides like commemorative plaques on a yacht: 87.6% on SWE-bench Verified, up from 80.8%, the highest score any agentic coding model has ever achieved. There was a 90.9% on the BigLaw Bench, as scored by Harvey AI, which the press release described as “the highest score of any Claude model to date” with the kind of breathless reverence usually reserved for a new Emirates business lounge or the discovery of a previously unknown internal organ. Claude Opus 4.7, ladies and gentlemen, was here.

It was going to change everything. It was, said Anthropic, “more tasteful and creative when completing professional tasks.” It would code autonomously across multiple files for hours at a stretch. It saw the world in 3.75 megapixels. It would, the launch implied, write your tax return, draft your divorce papers, and possibly, given a long weekend and a strong tailwind, sort out the Middle East.

And then, almost exactly seventy-two hours later, the entire thing fell off its plinth.

Within three days of the most triumphant model release in Anthropic's history, the developer community had decided, more or less unanimously, that the new model was not better. These were the actual paying customers, mind. The people who had been promised a digital Concorde and were instead handed a Reliant Robin with a flat tyre.

A Serious Regression

It was, in the words of one Reddit post that quickly accumulated 2,300 upvotes and a pile-on of agreement deep enough to drown a small spaniel, “a serious regression, not an upgrade.” A separate post on X went viral, garnering tens of thousands of likes and informing the world that it was “not an improvement.” Pro subscribers reported hitting their rate limits after just a handful of prompts. A handful. On the most expensive model on the market. The CEO of TrustedSec, Dave Kennedy, who used to work for the National Security Agency and is therefore not, by any reasonable measure, a man given to dramatic outbursts, declared in Fortune that the code quality was “over 47.3% worse” than at launch. “Really bad,” he said. “Unusably bad.” This is the AI equivalent of opening the bonnet of your brand-new flagship saloon and discovering, by way of welcome, that the engine is on fire.

So, either thousands of professional developers, security researchers, and enterprise customers have all simultaneously taken leave of their senses and decided to bully a perfectly good piece of software for sport, which seems statistically unlikely, or something has gone very seriously wrong inside Anthropic. This article is about what.

Back To The F***ing Past

To understand quite how spectacularly Anthropic managed to set fire to its own stable in seventy-two hours, you have to go back two months. Back to early February. Back to a time when, frankly, Claude Opus 4.6 was a thoroughly excellent piece of kit. It scored 65.4% on Terminal-Bench 2.0, which was a record. It came with a one-million-token context window, which is roughly enough digital memory to ingest the entire works of Tolstoy, the King James Bible, and the assembly instructions for an IKEA Pax wardrobe, all at once, and still have headroom left over to remember your dog's name. Reviewers swooned. Developers, who as a species are slightly less prone to swooning than golden retrievers, came as close to swooning as developers ever do. Everything was, briefly, marvellous.

And then.

And then, sometime between late February and the middle of March, while exactly nobody was looking, the model started to change.

Not officially, you understand. Oh, no. Officially, absolutely nothing had happened. The version number was the same. The pricing was the same. The Anthropic homepage was wittering away about its exciting new features, just as it had been the day before. But out in the field, where actual human beings were trying to do actual work, things had begun to go very strangely indeed. Prompts that had worked on Tuesday were failing on Wednesday. Code that the model had cheerfully written for weeks was now arriving mangled, half-finished, or not at all. Multi-step instructions, which are what these things are supposed to do, were being followed for the first three steps and then unceremoniously abandoned, the digital equivalent of hiring a builder, watching him put up the first wall of an extension, and then discovering at five o'clock that he has buggered off to the pub and is not, in fact, ever coming back.

And then came the truly damning bit. The bit, frankly, which should have ended several careers. Tests that the previous generation, Claude Opus 4.5, had still passed were now being failed by the supposedly newer, supposedly better, supposedly more advanced 4.6. Read that again. The old model was outperforming the new one. The model that had been gracefully retired to the digital pasture, ready for a comfortable old age of being entirely forgotten about, was beating the very thing meant to replace it. And nobody at Anthropic, at any level, in any office, on any continent, on any planet in the solar system, had thought it might be the slightest bit polite to mention this to the people paying for it.

The Goings On

What had actually happened, as the analyst MindStudio later confirmed once weeks of public bellowing had finally extracted something approximating a confession out of San Francisco, was a “post-launch safety fine-tuning update” that had “produced unintended regression in agentic instruction following.” Now, that is a sentence written by a man with a mortgage and a legal team. Translated into English, it means this: somebody at Anthropic, at some point, had reached into the engine bay of a car they had already sold you, fiddled with the timing belt, almost certainly broken the carburettor, possibly removed a valve or two for good measure, and then surreptitiously closed the bonnet again. No service record. No phone call. No apologetic letter on headed paper. Nothing.

Now. I want you to imagine, just for one moment, that Volkswagen had done this. Imagine that Volkswagen, in the dead of night, had reached into the ECU of every single Golf currently on the M25 and silently degraded its performance by twelve percent. Imagine the parliamentary inquiries. Imagine the German chief executive being frog-marched out of a glass-fronted building somewhere in Wolfsburg with a tweed jacket thrown over his head, while assembled photographers howled questions about the wider implications for international trade. Imagine the class actions. Imagine the apologetic press conferences. Imagine, in short, the apocalypse.

But because this is software, and because we have all collectively decided that software exists in some legally exempt parallel universe in which the normal rules of basic commercial decency simply do not apply, Anthropic got away with it. They got away with it, in fact, for about three weeks. Until 16 April. When they launched Opus 4.7. And every developer who had spent March aggressively tearing out their hair, suddenly, all at once, simultaneously, had a very good reason to start comparing notes.

Project Glasswing & the Two-Tier Internet

Now. Before we get to what is wrong with Opus 4.7, you need to understand that what is wrong with Opus 4.7 is, in a very real sense, deliberate. It is not, as some people on the internet have been claiming, the result of Anthropic accidentally setting fire to its own server farm, or being secretly throttled by a cabal of Saudi accountants in a Geneva basement, or of the Russians having got at it. No. It is, instead, the consequence of something rather more remarkable, which Anthropic announced on 7 April with an absolutely straight face and called Project Glasswing.

Stay with me. This is going to get absurd.

Project Glasswing is, ostensibly, a noble cybersecurity initiative. Anthropic, you see, had been training, in some Bay Area basement, a model called Claude Mythos Preview. And Claude Mythos Preview is, to use the technical term, a complete monster. According to Anthropic's own marketing materials, the thing had discovered thousands of zero-day vulnerabilities. Thousands. Plural. Although it later transpired, when somebody at Spiceworks did the actual maths, that this magnificent four-figure number had been extrapolated from a sample of just 198 manually reviewed reports. Which is the digital equivalent of eating one Rich Tea biscuit, declaring yourself an expert in the entire field of confectionery, and then flying business class to TED to give a 40-minute talk on the subject.

Anyway. Mythos was so terrifying, allegedly, that Anthropic decided it could not, in good conscience, release it to the public. So, they didn't. Instead, they handed it out to a club. A club of approximately fifty-two organisations: AWS, Apple, Microsoft, Google, JPMorganChase, CrowdStrike. The sort of companies that already have private jets, in-house chefs, and a separate butler for their cufflinks. These chosen few, the digital nobility, would get to use the actual good model. Everyone else, the rest of the entire human race, every developer in a one-bedroom flat in Slough, every startup founder, every freelancer, every cybersecurity researcher who is not on first-name terms with the chief information officer of Cisco, would get the deliberately constrained one. They would get Opus 4.7. Which Anthropic, in a System Card document presumably written through clenched teeth, openly described as “less broadly capable” than Mythos.

And just in case any of the peasants got uppity about all this, there was, of course, a velvet rope. They called it the Cyber Verification Program. Through which, if you were a security researcher with the right credentials and the right paperwork and presumably the right accent, you might apply to Anthropic, and Anthropic might, in their infinite wisdom, deign to grant you slightly less crippled access to the model you were already paying full price for. Which is roughly equivalent to McLaren selling you a brand-new 720S, then abruptly removing two of the cylinders before delivery, and inviting you to fill in a 47-page form, in triplicate, with a wax seal, to prove that you are worthy of having them put back in.

This, ladies and gentlemen, is the world we now live in.

The AUP False-Positive Firestorm

When The Register, a publication generally about as easy to scandalise as a long-serving Glasgow taxi driver, ran a piece on 23 April calling Claude Opus 4.7 an “overzealous query cop,” they were, if anything, being polite. Because what had actually started happening, in the days following the launch, was that the model had begun behaving like a particularly officious traffic warden in a small Cotswolds village. The kind who carries a clipboard everywhere, even to the pub. The kind who issues you a parking ticket while you are in the act of unloading your dying grandmother from the car. The kind, in short, who has confused civic duty with low-grade personal sadism.

Consider, for a moment, the case of Professor Golden G. Richard III. Professor Richard is not, as far as anyone can tell, a hardened cybercriminal. He runs the LSU Cyber Centre. He is the author of a textbook called Cybersecurity in Context. And on a perfectly ordinary day in April, while paying Anthropic in excess of $200 a month for the privilege, he asked Claude to proofread a lab exercise from his own textbook. A lab, mind. Containing crypto exercises so basic that they would not, frankly, alarm a moderately bright sixth-former. Claude refused. Flat refused. Declared the entire enterprise too dangerous to be associated with. Professor Richard, with admirable restraint, described the experience in GitHub issue #50916 with a single word. “Absurd.”

He was not alone. According to data compiled by analyst Karol Zieminski and corroborated by The Register, the number of Acceptable Use Policy complaints filed on GitHub had previously been running at a sedate two to eight per month for the best part of a year. In April 2026 alone, the figure rocketed to thirty-plus. Computational structural biologists, the kind of people who study how proteins fold for a living, were having their queries rejected. Cybersecurity educators were having their own textbook chapters declared contraband. And in what may be the single most preposterous detail of this entire saga, Russian-language users were being flagged simply for typing in Russian. Not asking for anything dodgy. Just typing in Russian. Apparently, the model, trained presumably on a diet of bad Cold War thrillers, had concluded that the Cyrillic alphabet itself constituted reasonable suspicion.

But the absolute crowning touch, the thing that elevates this from a mere policy embarrassment into a genuine work of corporate Dadaism, is the matter of the exemptions. Because Anthropic, recognising on some level that all of this nonsense was costing them paying customers, had introduced something they called the Cyber Use Case Exemption. Get one of these, and your account would be officially marked safe. The classifier, in theory, would step back, tip its hat, and let you crack on. There was, however, a small wrinkle. The exemptions, once granted, applied only to Claude Chat. They did not propagate to the API. They did not propagate to Claude Code. So a legitimate security researcher could be officially blessed by Anthropic head office, certified non-villainous, granted full clearance through the front door, and then discover that their actual professional work tools were still refusing to speak to them. It is the digital equivalent of being handed a key to the executive washroom, only to find, on arrival, that the toilet itself has filed a restraining order.

This, as The Register observed with the kind of dry understatement that the British press still does better than anyone else on Earth, is what happens “with greater security.” It is the story of an AI that has become, in its words, “overcautious, refusing to respond to harmless requests.” It is, more accurately, the story of an AI that has been issued with a tin hat and a whistle, and which now stands, twitching, at the edge of every conversation, ready at any moment to sound the alarm because somebody, somewhere, has just dared to ask it a question about cryptography.

The Stealth Tokeniser Price Hike

Now then. While the model was busy refusing to help university professors edit their own textbooks, Anthropic had also, somewhere in the background, embarked on a quite spectacular feat of corporate accountancy of the sort which would, in any other industry, get you a tabloid front page and a select committee summons before lunch.

The thing you have to understand is that large language models bill you by the token, which is the AI equivalent of being charged by the metre, except that the metre in question is invisible, has no fixed length, and is calibrated entirely by Anthropic, in San Francisco, in private. It is, conceptually, a black cab meter. And what Anthropic did, between Opus 4.6 and 4.7, in a manoeuvre which I can only describe as audacious, was to swap the meter.

The price-per-token, advertised loud and proud at five dollars per million in and twenty-five dollars per million out, did not move. Not by so much as a cent. Oh no. The meter itself, however, the small mechanical bit doing the actual counting, had been retrofitted mid-flight with a brand-new module which, according to the analyst Karol Zieminski, was now generating up to 1.35 times as many tokens for the same English you had fed into it. Code-heavy work was hit hardest. JSON, structured data, the bread and butter of every working developer alive, all of it metabolised by the model at a 35% premium for an absolutely identical request.

Which is, by every conceivable definition, a functional price increase. And the fact that Anthropic has refused to call it a price increase, has refused to write a press release announcing the price increase, has refused, in fact, even to acknowledge in plain English that anything has changed, does not, I am afraid, make it any less of one. The Business Insider readership clocked it inside an afternoon. Pro subscribers, paying two hundred quid a month for the privilege, were filing reports of slamming face-first into the rate limit after three questions. Three. Three questions, and the digital cab pulls over, tells you to get out, and leaves you walking home in the rain.

This is the AI equivalent of walking into your local on a Friday evening, ordering your usual pint, and discovering that the landlord, while keeping the menu price absolutely unchanged, has fitted every glass behind the bar with a clever new false bottom which holds an inch and a quarter less beer than it did last week. And then, when you complain, the landlord folds his arms, looks you dead in the eye, and tells you, with the absolute seriousness of a man who fully intends to be believed, that nothing whatsoever has changed.
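For anyone who would rather check the false bottom with a ruler, here is a minimal sketch of the sums. The function name and the example request size are my own invention, and it assumes the worst-case 1.35x inflation applies equally to input and output tokens, which the analysis quoted above does not break down. The list prices are the advertised ones.

```python
# Hypothetical helper: the names are illustrative, the prices are the advertised ones.
PRICE_IN_PER_MILLION = 5.00    # dollars per million input tokens
PRICE_OUT_PER_MILLION = 25.00  # dollars per million output tokens


def request_cost(tokens_in, tokens_out, inflation=1.0):
    """Dollar cost of one request when the tokeniser emits `inflation`
    times as many tokens for the same text (assumed equal for input and output)."""
    billed_in = tokens_in * inflation
    billed_out = tokens_out * inflation
    total = billed_in * PRICE_IN_PER_MILLION + billed_out * PRICE_OUT_PER_MILLION
    return total / 1_000_000


# An invented example request: 100,000 tokens in, 20,000 tokens out.
old = request_cost(100_000, 20_000)        # counted on the old meter
new = request_cost(100_000, 20_000, 1.35)  # worst case reported for the new meter
increase_pct = (new / old - 1) * 100

print(f"old: ${old:.2f}  new: ${new:.2f}  increase: {increase_pct:.0f}%")
```

Because the per-token price is a constant, the bill scales in a straight line with the token count. At the top of the reported range that is a 35% rise on an identical request, and at the bottom of the range (1.0x) it is no rise at all.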

Look. Do not take my word for any of this. Take Fortune's. Because Fortune, on the matter of Opus 4.7, summed it all up with the sort of dry brevity that turns a perfectly good observation into a properly published verdict. The whole launch, the magazine concluded, “is not a meaningful upgrade. It is the pre-nerf build of 4.6 dressed in a higher model number, slipped out the door with a tokeniser change that functions as a stealth price hike.” Fortune's words. Not mine. A stealth. Price. Hike.

The 47 Percent

And now we come to the bit which is, frankly, funny. Funny in the way that a man slipping on a banana skin outside the headquarters of the International Banana Safety Council is funny. Because the entire reason Anthropic locked Claude Mythos Preview in a cellar, the whole justification for the cybersecurity safeguards, the Cyber Verification Program, the digital velvet rope, the lot, was that Mythos was too good at finding security vulnerabilities.

The unreleased model, in their telling, was so dangerously brilliant at sniffing out flaws in code that it could not be unleashed upon the wider world. Right. Hold that thought. Because Dave Kennedy, who runs the cybersecurity firm TrustedSec, who used to work for the National Security Agency, and who is therefore the sort of man whose professional opinion on the quality of a security-relevant tool ought to count for something, has been tracking the code quality of the publicly available Opus 4.7 since launch.

His verdict, delivered to Fortune in late April, was the sort of sentence that ought to have caused every senior executive at Anthropic to choke on their oat-milk flat white. “Right now,” he said, “from five weeks ago to today, the code quality is over 47.3% worse than when it was first released.” “Really bad,” he added. “Unusably bad.” Forty-seven point three percent worse. From a former NSA analyst. Speaking on the record. To Fortune. About the publicly available, civilian-grade, deliberately constrained version of the model that Anthropic has decided is the safe one to ship to the rest of us.

So, to recap. The company that was so terrified of one of its models being capable of finding security vulnerabilities that it locked the model in a cellar, restricted access to fifty-two hand-picked corporate partners, and demanded a wax-sealed application form before letting anyone else even glimpse it, has, instead, shipped to the wider public a different model whose code quality has, according to one of the most respected practitioners in cybersecurity, actively gone backwards by nearly half since the day it launched. The irony is so dense it has its own gravitational field.

And this, before we even get to what Anthropic did to the controls. Because while all this was going on, the engineers in San Francisco had also been busy pulling the steering wheel off the dashboard.

Opus 4.7, you see, ships with a brand-new “effort level” system, the upper end of which is now called xhigh, sitting between high and max, and which is, on Claude Code, the new default for all paying customers. Which sounds reasonable until you read the small print and discover that what was previously called high effort is now, functionally, the new medium, which means every single paying customer is, in real terms, being silently upshifted into a higher gear and burning more fuel for what is allegedly the same journey. The settings that used to let you tune the engine yourself, the temperature, the top_p, the top_k, the entire family of parameters every developer has been using since approximately the dawn of time, have been removed.

Try to use them, and you do not get a polite warning. You get a 400 error. The reasoning traces, the running commentary the model used to produce showing you its working, are now hidden by default. You ask the question, you wait, and then the answer arrives, with no indication of whether the model has thought about it for 40 seconds or just guessed. It is the AI equivalent of buying a new BMW, opening the door, and discovering that the steering wheel, the gearstick, the pedals, the rev counter, and the speedometer have all been replaced by a single button marked 'trust us'.
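For the working developer, the practical upshot is that old request payloads need the dead knobs pulled out before they are sent. What follows is a minimal sketch, not Anthropic's SDK: the request is a plain dictionary of my own devising, the model identifier is a placeholder, and the set of removed parameters simply mirrors the three named above.

```python
# Assumption: this set mirrors the three parameters named in the text above.
REMOVED_SAMPLING_PARAMS = {"temperature", "top_p", "top_k"}


def strip_removed_params(request):
    """Return (clean_request, dropped_names) without mutating the input dict."""
    clean = {k: v for k, v in request.items() if k not in REMOVED_SAMPLING_PARAMS}
    dropped = sorted(k for k in request if k in REMOVED_SAMPLING_PARAMS)
    return clean, dropped


# A hypothetical legacy payload; the shape and the model id are placeholders.
legacy_request = {
    "model": "example-model-id",
    "max_tokens": 1024,
    "temperature": 0.2,
    "top_p": 0.9,
    "messages": [{"role": "user", "content": "Proofread this lab exercise."}],
}

clean_request, dropped = strip_removed_params(legacy_request)
print("dropped:", dropped)
print("remaining keys:", sorted(clean_request))
```

Returning the list of dropped names, rather than discarding them silently, at least gives you something to put in a log, which is more of a service record than the rest of this saga has managed.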

In Fairness

Now, it would be unsporting of me, having spent the last several thousand words administering a quite thorough kicking, not to give Anthropic at least one fair hearing. So here it is. The defence brief. Delivered, on the record, in as charitable a register as I am prepared to manage at this hour.

Anthropic genuinely did have a serious problem on its hands. Claude Mythos Preview, by their own admission, was an extraordinarily capable security tool, and unleashing it onto the global internet without safeguards would have been the digital equivalent of handing every angry teenager in Coventry a working flamethrower. So they didn't. That, on its own, is a defensible call. The benchmark gains on Opus 4.7 are also entirely real. 87.6% on SWE-bench Verified is the highest agentic coding score the public has ever seen. The 90.9% on the BigLaw Bench is, according to Harvey AI, the best contract-reviewing performance any Claude model has ever produced. The vision improvements are, by any reasonable measure, extraordinary.

Nor is it just the official benchmark sheet that is favourable. Amjad Masad, the chief executive of Replit, has publicly stated that, for the work his users do every day, Opus 4.7 achieves the same quality as 4.6 at a lower cost, and that, in his words, “it feels like a better coworker.” The team at Cursor, who run their own internal evaluation called CursorBench, reported that the new model jumped from 58% to 70%, which is technically known in the industry as an absolute belter. Anthropic's own chief executive, Dario Amodei, has stated for the record, in writing, in his company's official 23 April postmortem, that “we never intentionally degrade our models.” Anthropic's engineers published a postmortem acknowledging at least some of what had gone wrong with the launch, which, by Silicon Valley standards, frankly counts as honesty.

There. That is the case for the defence. As thorough an account as I am prepared to give. The chief executive has spoken. The chief executives of major partner platforms have spoken. The benchmarks have been honestly compiled and are, by any reasonable standard, impressive. And now, having heard all of it, having weighed it, and having considered it in the round, it is, I am afraid, time to move on to the verdict.

Tuning Sprints

We have, at the time of writing, a flagship artificial intelligence model from a company valued at the better part of three hundred billion dollars which (a) was launched with a benchmark fanfare loud enough to wake the dead in three separate counties, and which (b) within seventy-two hours had its own paying customers describing it on Reddit, on X, on GitHub, on Substack, on Medium, on YouTube, and indeed on every other digital pulpit a furious developer can climb up onto, as a serious regression, which (c) had been trailed by a previous model that had itself been silently degraded mid-cycle by a safety update that nobody at Anthropic could be bothered to put in a changelog, and which (d) now refuses to proofread textbooks written by tenured American professors who have paid for it, refuses to assist computational structural biologists who study how proteins fold for a living, refuses to talk to Russian-language users on the apparent grounds that the Cyrillic alphabet is now itself a national security threat, and refuses, frankly, to do quite a lot of the things it was specifically built to do, while at the same time (e) generating up to 35% more tokens for the exact same input thanks to a brand-new tokeniser that nobody asked for and which Anthropic has steadfastly refused to describe as a price increase even though it is, by every reasonable measure, a functional one, and which (f) burns through Claude Pro rate limits in a handful of prompts, the same number of questions a moderately curious six-year-old gets through before pudding, and which (g) according to a former NSA analyst now running one of the more respected cybersecurity firms in the United States, has produced code which is now over 47.3% worse than at launch, despite being the deliberately constrained civilian-grade derivative of the model Anthropic locked away precisely because that one was too good at finding security holes, and which (h) has had its actual control parameters surgically removed by the engineers and replaced with a hidden adaptive thinking system, and which (i) has had its problems characterised by the company's own product manager, in writing, on a public platform, as a matter requiring further “tuning sprints,” which is the sort of phrase a man reaches for when the words he actually means are “we have, at this precise moment in time, absolutely no idea what we have done here, and would the ladies and gentlemen of the developer community kindly bear with us.”

And the verdict on all this, from the developers, the analysts, the security researchers, the columnists, the academics, the cybersecurity educators, the long-suffering Pro subscribers, and indeed every single soul who has been forced to use the thing in anger since 16 April? That something has gone catastrophically, comprehensively, and quite possibly historically wrong inside Anthropic. It is, in that very specific way that only a Silicon Valley megacorp with a thirty-billion-dollar AWS contract can manage, the most expensively, theatrically, magnificently misjudged model launch in the entire short and increasingly hilarious history of artificial intelligence.

In the world.

A Note From The Author

Right. Time to take the Clarkson hat off for a moment and speak as myself.

I run a small studio in Somerset called ScopeSite Digital Studios. We build websites and AI visibility systems for professional services firms. We use Claude Opus on the Max plan every single day to do the actual work, which is precisely why I have spent the past week paying very close attention indeed to what Anthropic has been doing to it. When the model breaks, we feel it. When the bills go up, we feel that too. This is not abstract for us. It is the digital toolkit we earn our living with.

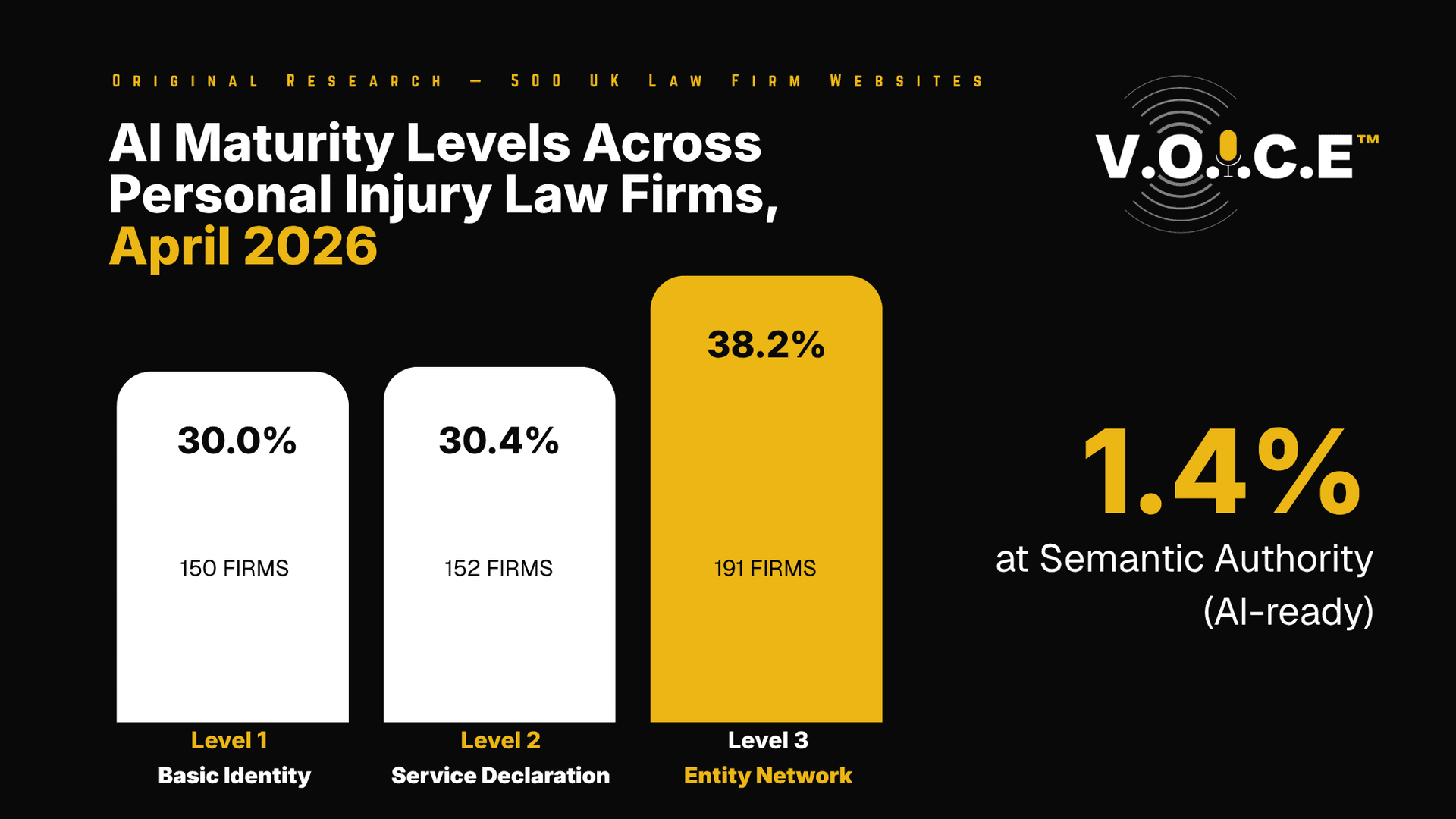

ScopeSite exists because the average website built by the average marketing agency in the United Kingdom is, frankly, not fit for the era we are now in. AI search engines are eating Google's lunch. ChatGPT, Perplexity, and Claude itself are now the way professional buyers find solicitors, accountants, dental practices, IFAs, and estate agents. They are not crawling websites the way Google used to. They are looking for structured data, entity signals, and authoritative citations. Most websites in the United Kingdom have none of those things. We build the ones that do.

A few specific bits of kit we offer, since we are already here:

V.O.I.C.E is our methodology for getting your firm cited by AI assistants when prospects ask about the services you offer. Schema-first architecture, entity-based SEO, the lot. Built specifically for the era of AI-driven search rather than retrofitted from old SEO playbooks. Details at scopesite.co.uk/voice.

LLM Brain is a productised AI setup we offer to small business owners who want to actually use AI tools properly, rather than just having a ChatGPT subscription that nobody opens. £250 setup, or £150 if you use the promo code BRAINLAUNCH26, and £85 a month managed. It is what I personally use to keep my own head straight, which probably says more than the marketing copy ever will. Available at scopesite.co.uk/llm-brain.

Server-side rendered Next.js websites are what we build when a firm needs an actual modern web presence rather than the WordPress hand-me-down their nephew set up in 2019. Faster than what most agencies build, and properly schema'd for the AI agents that are now doing the heavy lifting on search. Available at scopesite.co.uk/web-design.

ScopeSite is veteran-owned and run from Beckington, Somerset. Military precision. Zero bullshit. If any of that sounds like it might be useful to you, or to someone you know currently being hosed for four grand a month by an agency producing nothing of consequence, the main website is at scopesite.co.uk. The local site for Somerset firms is fromewebdesign.com. Pick whichever looks more your speed.

Sources & References

This piece draws on Anthropic's own announcements and engineering postmortem, independent technical analysis, established trade-press coverage, and primary community reports filed on GitHub, Reddit, and X. Sources are grouped below by category. Where multiple outlets covered the same event, the most authoritative or original source has been cited.

Anthropic Official Sources

Claude Opus 4.7 launch announcement, 16 April 2026: https://www.anthropic.com/news/claude-opus-4-7

What's new in Claude Opus 4.7 (effort levels, vision, tokeniser): https://platform.claude.com/docs/en/about-claude/models/whats-new-claude-4-7

Migration guide (deprecated parameters, 400 errors): https://platform.claude.com/docs/en/about-claude/models/migration-guide

Project Glasswing announcement: https://www.anthropic.com/project/glasswing

Claude Mythos Preview disclosure: https://red.anthropic.com/2026/mythos-preview/

Engineering postmortem on Claude Code quality reports, 23 April 2026 (Amodei quote: “we never intentionally degrade our models”): https://www.anthropic.com/engineering/april-23-postmortem

Independent Benchmarks & Analysis

LLM-Stats benchmark analysis of Opus 4.7 launch: https://llm-stats.com/blog/research/claude-opus-4-7-launch

Harvey AI BigLaw Bench result (90.9%): https://www.harvey.ai/blog/opus-4-7-now-live-in-harvey

Karol Zieminski's analysis on tokeniser cost inflation (1.0–1.35x): https://karozieminski.substack.com/p/claude-opus-4-7-review-tutorial-builders

MindStudio analysis on the Opus 4.6 mid-cycle regression: https://www.mindstudio.ai/blog/was-claude-opus-4-6-nerfed-what-happened

Trade Press Coverage

The Register on AUP false positives and the “overzealous query cop” characterisation: https://www.theregister.com/2026/04/23/claude_opus_47_auc_overzealous/

Business Insider on the developer backlash and tokeniser cost shock: https://www.businessinsider.com/anthropic-claude-opus-4-7-backlash-tokens-2026-4

Fortune on the Dave Kennedy 47.3% code-quality verdict and the “stealth price hike” framing (paywalled): https://fortune.com/2026/04/22/anthropic-claude-opus-4-7-code-quality-trustedsec/

Where's Your Ed At on the wider AI launch backlash: https://www.wheresyoured.at/four-horsemen-of-the-aipocalypse/

Benzatine on the Opus 4.7 enthusiasm-to-discontent shift: https://benzatine.com/news-room/opus-47-enthusiasm-turns-to-discontent-over-ai-models-performance

DigitalToday on the post-launch backlash and rate-limit complaints: https://www.digitaltoday.co.kr/en/view/48976/anthropic-claude-opus-47-faces-backlash-after-launch-over-performance-and-token-costs

Community Sources

GitHub issue #50916, Professor Golden G. Richard III's blocked cryptography lab: https://github.com/anthropics/claude-code/issues/50916

Related GitHub issues on AUP false positives (Russian-language inputs, structural biology, API exemption failures), referenced in The Register: https://www.theregister.com/2026/04/23/claude_opus_47_auc_overzealous/

Reddit r/ClaudeCode community analysis on effort-level capability curve: https://www.reddit.com/r/ClaudeCode/comments/1snun4e/everyones_hyping_opus_47_nobodys_talking_about/

Reddit r/ClaudeAI “serious regression” thread (2,300 upvotes), aggregated via Where's Your Ed At and Benzatine coverage above.

Positive Industry Testimony (cited in In Fairness)

Amjad Masad (Replit CEO) endorsement of Opus 4.7 efficiency, cited via Anthropic launch press materials: https://www.anthropic.com/news/claude-opus-4-7

Cursor CursorBench evaluation (58% → 70%), cited via the same Anthropic launch materials.

All sources accessed and verified between 23 and 26 April 2026. Where claims rely on proprietary internal tools (notably the TrustedSec 47.3% code-quality figure), the figure is presented as the analyst's own published statement to Fortune rather than as an independently reproducible benchmark. The article's central factual claims have been cross-referenced across at least two independent sources before publication.